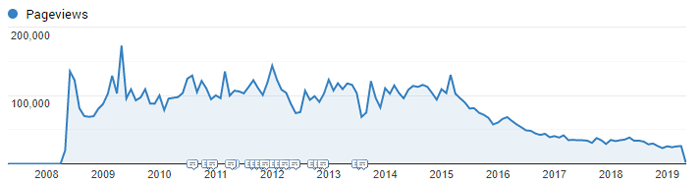

Twelve years ago, a group of us founded LessThanDot, a site to help us share technical content and advice with the community. We were successful, helping thousands of folks find new solutions, sidestep issues, or upgrade skills. Across 2,300 blog posts, we saw 100,000 views/month, including 25 individual posts that received over 100,000 views each (and 3 over 1,000,000).

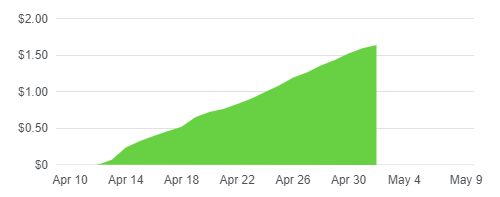

We didn't profit financially from the site. We ran some light google ads at one point and accepted donations, but most of the funding came from our own pockets. After 12 years our posting has trickled off, but the content still attracts 30,000 views/month.

Which brings us to a few months ago: how do we continue to host the content and help folks, without the monthly bill of a wordpress setup?

The answer was migrating the content to a static site generator and hosting it in Azure, using a combination of cheap storage (Azure Blob Storage) and CDN to support the content at 1/25th of the cost.

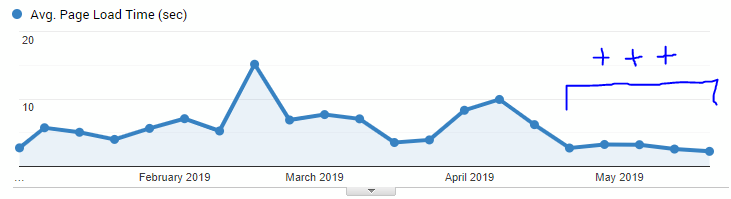

The results are $2/month for 17,000 users/month, faster page response times, free SSL certificates, and automatic deploy in 4 minutes.

If you're considering a similar move, whether to archive content or simply shift off wordpress, here's how we did it.

The Conversion Process

The first step was selecting a set of tools.

Selecting Hugo

I reviewed several static site generators, but ultimately chose hugo because it had one of the better wordpress migration paths. This also ended up being helpful when it came time to iterate on the site implementation, as it could rebuild a 2,300 blog post site in seconds rather than minutes.

The plugin that swayed me to this path was the Wordpress to Hugo Exporter plugin.

Selecting Azure

I have familiarity with Azure and AWS, and either could have served as a solution. In this case, I ultimately chose Azure only because I am actively spending money on my personal Azure subscriptions and my AWS ones are still running completely free. I estimated the monthly cost would be $1-$2 and didn't want to add another bill to my personal accounting.

Prepping Wordpress

The first few runs of the plugin above resulted in timeouts and then small messes. The ingredients for a successful export ended up being:

- Download the plugin and add it to the wordpress plugins folder

- Modify the plugin to allow for unlimited memory and timeout

- Turn off plugins that manipulate post content (server-side code highlighting, for instance)

- Modify how the plugin handles `<pre>` tags for how we defined code blocks

1. Downloading the plugin

The plugin is available here. Follow the normal installation process.

2. Unlimited Resources

To modify the plugin for unlimited memory and timeout, go to hugo-export.php line 320 and add this to the export() method:

    ini_set('memory_limit', -1);
    ini_set('max_execution_time', 0);

Note: even with this setting, our poor little host couldn't manage to export all of the content, so I actually performed the export on a local VM, as we have a vagrant setup that allows us to run a production-like host locally.

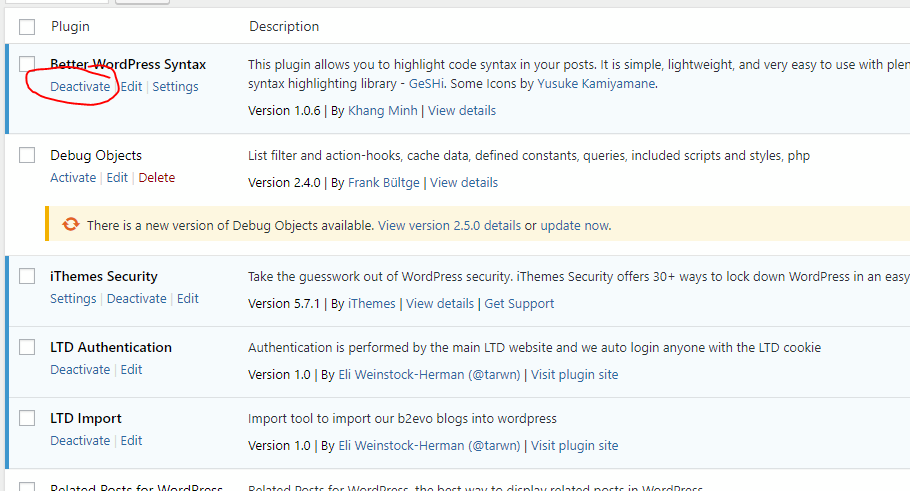

3. Turn off conflicting plugins

A few test exports showed that a couple of our plugins were pre-processing content from the posts. One of the biggest was the code highlighting plugin, which pre-rendered code blocks into highlighted HTML and would have forced me to bring across all the CSS and tooling to support that.

Disabling this plugin was an easy answer, leaving us with some slightly non-standard code blocks in the post markup:

    <pre lang="whatever">
    ...
    </pre>

4. Customize the exporter plugin

Due to the format of the code blocks in #3, some alterations were necessary to the exporter. It could handle code blocks that were formatted in the `<pre><code lang="">..</code></pre>` style, but not the one we had.

There are two changes: registering an optional lang attribute on the `<pre>` tag, and generating code fences/blocks with that lang in the export.

In wordpress-to-hugo-exporter-master/includes/markdownify/Converter.php, alter the pre entry like so (line 107):

from

    'pre' => array(),

to

    'pre' => array(
        'lang' => 'optional',
    ),

then later in the file, in the handleTag_pre() function, insert this at the top (line 1043):

    if ($this->keepHTML) {
        if ($this->parser->isStartTag) {
            $this->stack();
            if (isset($this->parser->tagAttributes['lang'])) {
                // Opening <pre lang="...">: emit the opening fence with the language
                $lang = $this->parser->tagAttributes['lang'];
                $this->out("```" . $lang . "\n", true);
                // Buffer the block's contents until the closing tag
                $this->buffer();
                return;
            }
        } else {
            $tag = $this->unstack();
            if (isset($tag['lang'])) {
                // Closing </pre>: escape angle brackets in the buffered contents, then close the fence
                $this->out(str_replace('<', '&lt;', str_replace('>', '&gt;', $this->unbuffer())));
                $this->out("```\n", true);
                return;
            }
        }
    }

This outputs an opening code fence tagged with the contents of the lang attribute when it runs into an opening `<pre>` tag, escapes the angle brackets in the block's contents as HTML entities, then outputs the closing fence.

Note: I later found a few mangled fences or fences without carriage returns and had to fix them manually, so this is 98% accurate but has a flaw somewhere
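To make the intended transformation concrete, here is a minimal standalone sketch in Python of the same idea: turn `<pre lang="x">` blocks into fenced blocks tagged with that language. It is not the plugin's code and does not use its parser; the `pre_to_fence` name and the regular expression are assumptions for illustration only. It also forces the closing fence onto its own line, which is one thing to check for when hunting the kind of mangled fences mentioned above.

```python
import re

# Build the fence marker programmatically so it never clashes with the
# fence that wraps this example.
FENCE = "`" * 3

# Matches <pre lang="..."> ... </pre>, capturing the language and the body.
# Assumption: the lang attribute is the only attribute on the tag.
PRE_BLOCK = re.compile(
    r'<pre\s+lang="([^"]*)"\s*>(.*?)</pre>',
    re.DOTALL | re.IGNORECASE,
)


def pre_to_fence(html: str) -> str:
    """Replace each <pre lang="x">body</pre> with a fenced block tagged x."""

    def replace(match: re.Match) -> str:
        lang = match.group(1)
        # Trim surrounding newlines so the fences always sit on their own lines.
        body = match.group(2).strip("\n")
        return "\n" + FENCE + lang + "\n" + body + "\n" + FENCE + "\n"

    return PRE_BLOCK.sub(replace, html)
```

Running it over a string such as `<pre lang="sql">SELECT 1</pre>` yields a three-line fenced block tagged `sql`. A regex is fine for spot checks like this, but it will not cope with nested or malformed HTML the way a real parser does.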

As one last change, in wordpress-to-hugo-exporter-master/hugo-export.php I added the post ID to the list of fields that are added to the front matter of the exported markdown files, in case I had to find a post by its original ID later (line 128, in the $output array at the top of the convert_meta() function):

    'ID' => $post->ID,

Post-processing

The export still required some clean-up, some of which was immediately obvious and some I'm still finding on a case-by-case basis.

- The exported images make git break

- Wordpress replaces basic quotes with unicode ones

- Broken code fences

- URLs in Azure Storage/S3 are case sensitive

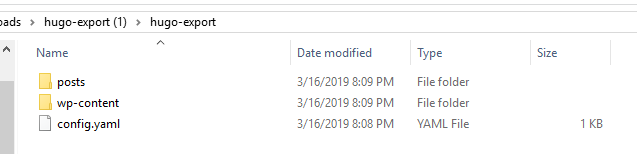

1. Exported images too large for git

When the export is complete, you will have two folders: posts and wp-content.

I found that our wp-content folder was large enough to break git consistently, so I ultimately chose to manually upload this folder to Azure blob storage and only brought the posts folder into my hugo setup (covered in the next section).

Because of #4 (case-sensitive URLs), you should also run a script to lowercase all of the image files and folders in wp-content prior to upload. I didn't do this at first, started manually renaming them, then later just ran a script and uploaded the duplicates (fractions of a cent/month in storage costs).
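For anyone not on Windows, a rough Python equivalent of that lowercasing pass might look like the sketch below. It is not the script used for the site: the `lowercase_tree` name is made up for illustration, and the two-step rename through a temporary ".inprog" name is borrowed from the same trick used elsewhere in this post so that it also behaves on case-insensitive filesystems.

```python
import os


def lowercase_tree(root: str) -> list[tuple[str, str]]:
    """Rename every file and folder under root to lowercase.

    Returns the (old_path, new_path) pairs that were renamed.
    """
    renamed = []
    # topdown=False visits a folder's contents before the folder itself is
    # renamed by its parent, so the paths os.walk hands us stay valid.
    for parent, dirs, files in os.walk(root, topdown=False):
        for name in files + dirs:
            lower = name.lower()
            if name == lower:
                continue
            old = os.path.join(parent, name)
            new = os.path.join(parent, lower)
            tmp = old + ".inprog"
            os.rename(old, tmp)
            # After moving the original aside, anything still at the target
            # path is a genuinely different file: stop rather than overwrite.
            if os.path.exists(new):
                os.rename(tmp, old)
                raise FileExistsError(f"{new} already exists")
            os.rename(tmp, new)
            renamed.append((old, new))
    return renamed
```

Calling `lowercase_tree("wp-content")` from the export folder would rename in place, so it is worth running against a copy first.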

Using Powershell, here's lowercasing everything:

    Get-ChildItem -Path ".\wp-content\*" -Recurse | % { if ($_.Name -cne $_.Name.ToLower()) { ren $_.FullName ($_.Name.ToLower() + ".inprog"); ren ($_.FullName + ".inprog") $_.Name.ToLower(); } }

And then using Azure CLI, here's uploading the wp-content folder to the $web container that I'll be hosting from:

    az storage blob upload-batch -s wp-content -d "`$web" --connection-string "CONNECTION STRING HERE" --destination-path "wp-content" --dryrun

2. Wordpress replaces basic quotes with unicode ones

Single quotes, double quotes, dashes, ellipses...all of these conspired to come out the other side of hugo in the usual unicode mess. I chose to manually revert them back to basic ASCII characters by using the search and replace functionality in vs code.

One warning: I found that when operating across this number of files, vs code would get slower and slower. "Save All" often, search again for the offending character after you "successfully" finished replacing it, and restart vs code every couple replaces if you have similar problems.

It's not pretty, but it got the job done.
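If the editor route gets too slow, the same replacements could be scripted. The sketch below is one way to do it in Python, not what was actually run for this migration. The exact character list is an assumption based on the punctuation named above, and `asciify` / `asciify_posts` are hypothetical names.

```python
from pathlib import Path

# Assumed mapping of "smart" punctuation to plain ASCII; adjust to taste.
REPLACEMENTS = {
    "\u2018": "'",    # left single quote
    "\u2019": "'",    # right single quote / apostrophe
    "\u201c": '"',    # left double quote
    "\u201d": '"',    # right double quote
    "\u2013": "-",    # en dash
    "\u2014": "--",   # em dash
    "\u2026": "...",  # ellipsis
}
TABLE = str.maketrans(REPLACEMENTS)


def asciify(text: str) -> str:
    """Return text with the mapped unicode punctuation replaced by ASCII."""
    return text.translate(TABLE)


def asciify_posts(content_dir: str) -> int:
    """Rewrite every .md file under content_dir in place; return files changed."""
    changed = 0
    for path in Path(content_dir).rglob("*.md"):
        original = path.read_text(encoding="utf-8")
        cleaned = asciify(original)
        if cleaned != original:
            path.write_text(cleaned, encoding="utf-8")
            changed += 1
    return changed
```

Note that this touches code blocks as well as prose, which is usually what you want here (curly quotes inside code samples are almost always damage), but it is worth reviewing the diff in git before committing.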

3. Broken code fences

I found a few cases where code fences ended in `</pre>` or had somehow come out without carriage returns. These were consistent enough that I could use search and replace, like the prior step, to fix up the markdown.

We also had a couple of language codes (like tsql) that were not supported by highlight.js, so I used search + replace to swap them out with codes that were supported (sql).
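The language-code swap is mechanical enough to script. Here is a small Python sketch of that idea, assuming fences are written with three backticks at the start of a line; only the tsql to sql mapping comes from this post, and the `fix_fence_languages` name is hypothetical.

```python
import re

# Build the fence marker programmatically so it never clashes with the
# fence that wraps this example.
FENCE = "`" * 3

# Unsupported language code -> supported replacement. Only tsql -> sql is
# taken from the post; add others as you find them.
LANGUAGE_FIXES = {"tsql": "sql"}

# An opening fence: three backticks at line start followed by a language code.
OPENING_FENCE = re.compile(r"^" + FENCE + r"([\w+-]+)[ \t]*$", re.MULTILINE)


def fix_fence_languages(markdown: str, fixes: dict = LANGUAGE_FIXES) -> str:
    """Swap unsupported language codes on opening fences; leave the rest alone."""

    def swap(match: re.Match) -> str:
        lang = match.group(1)
        return FENCE + fixes.get(lang.lower(), lang)

    return OPENING_FENCE.sub(swap, markdown)
```

Closing fences carry no language code, so the pattern skips them and only the opening line of each block changes.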

4. URLs in Azure/S3 are case sensitive

The metadata for posts will include the lowercase permalink in a url property, so when we hook up hugo it will produce a post in that location.

However, nothing standardized the way we typed in URLs to link to those posts, we had plenty of alternate categories, and category names were not lowercase in Wordpress. So we had a bit of a mess.

Later on, I'll configure the CDN to force incoming request URLs to lowercase, which helps with part of this problem.
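The links inside the posts themselves can also be normalized at the source. The Python sketch below lowercases the targets of markdown links that point at the site's own paths. It is an illustration rather than something that was run as part of this migration: the prefixes are assumptions based on the /index.php/ permalinks and the wp-content folder mentioned in this post, the function name is hypothetical, and links written with a full domain are deliberately left alone.

```python
import re

# Markdown inline link or image: [text](target)
LINK = re.compile(r"\[([^\]]*)\]\(([^)\s]+)\)")

# Assumed site-relative prefixes that identify internal links.
INTERNAL_PREFIXES = ("/index.php/", "/wp-content/")


def lowercase_internal_links(markdown: str, prefixes=INTERNAL_PREFIXES) -> str:
    """Lowercase the target of every internal link; leave external links alone."""

    def fix(match: re.Match) -> str:
        text, target = match.group(1), match.group(2)
        if target.lower().startswith(prefixes):
            target = target.lower()
        return "[" + text + "](" + target + ")"

    return LINK.sub(fix, markdown)
```

For example, a link to `/index.php/DataMgmt/Some-Post/` becomes `/index.php/datamgmt/some-post/`, matching the lowercase url property in the exported front matter.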

Setting up Hugo

For this part, the easy stuff is already documented in the hugo setup instructions, and the hard part is creating a custom theme to match your website. I haven't found the hugo documentation to be wonderful for this. It has a lot of detail, but either I think sufficiently differently than the authors or it spends a lot of time on the what and not enough on how to get from here to there.

Once you have hugo set up, you'll want to copy your posts from the export into the content folder.

You can see the hugo implementation here: lessthandot-hugo.

Note: You don't need to subfolder hugo like I did (top-level blogs folder). I was trying several things, then got too far along to want to undo the folder structure.

Hosting in Azure

Assuming you can connect the dots from raw post files in Hugo to a decent theme, the next step is to set up our "hosting". This includes Azure storage to hold the actual content and a CDN that will enable us to apply some rules to incoming requests to map to our much stricter storage system. Then we need to be able to deploy changes on demand.

- Set up Azure Blob and CDN

- Set up CircleCI for deployment

1. Set up Blob and CDN

For the initial setup, you can follow this excellent guide.

You now have blob storage and a CDN, with rules to append /index.html where needed.

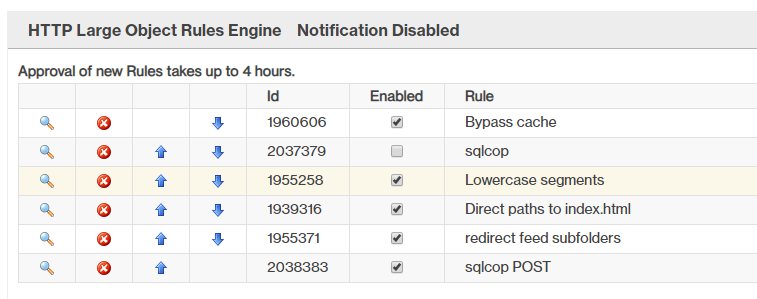

I also added a separate rule to lowercase all incoming URLs:

    IF [Always]
    [URL Rewrite]
    Source [/#########/lessthandot/] [(.*)]
    Destination [/#########/lessthandot/] [$L1]

I also added rules for a couple other cases I found in the 404 logs, like sending folks hitting the wordpress RSS feeds to alternative URLs.

Note: They are not kidding about rules taking 4 hours to take effect, or content taking some time to work properly (and returning an error instead of a passthrough during that period)

One last rule that was useful was adding a bypass rule so I could bypass the cache when I was testing URLs, instead of constantly purging and waiting for content to be loaded:

    IF [URL Query Parameter]
    Name [bypass] [Matches] Value(s): [yes]
    [Bypass Cache] [Enabled]

Now if I visit a URL and add a querystring of ?bypass=yes, I bypass the CDN content and pull directly from the underlying origin (blob storage).

Compression For the compression, the instructions are unclear and will give you errors for some of the MIME types that they say are supported. I found this set works fine, though:

File Types:

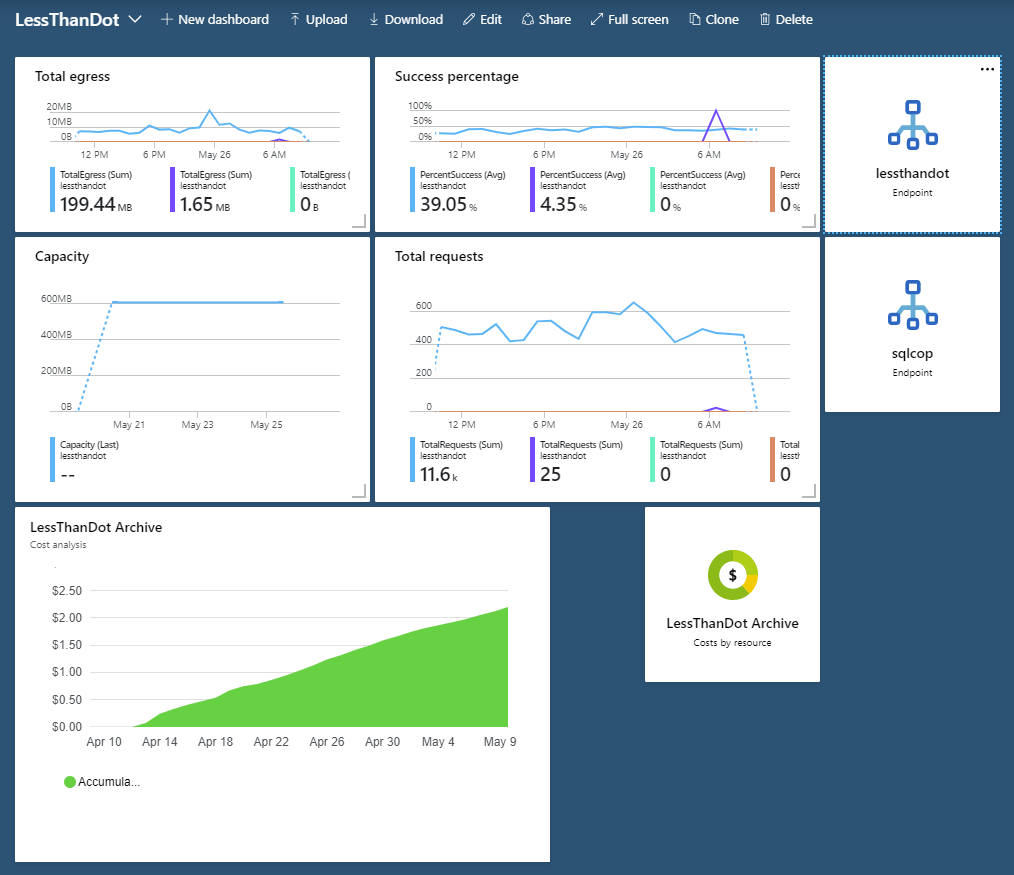

text/plain,text/html,text/css,text/javascript,text/xml,application/javascriptMonitoring Costs Finally, I created a custom dashboard specifically for LessThanDot so I could monitor costs and usage:

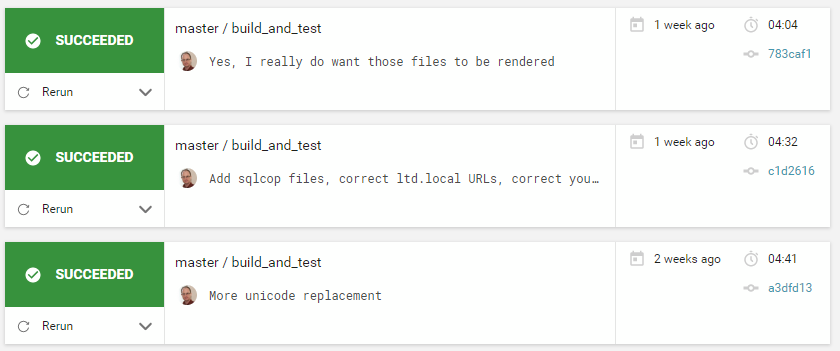

2. Set up CircleCI for deployment

I could have deployed manually from my local machine, using an Azure CLI command similar to the one I used for uploading images. But I'm approaching 2 decades of bad experiences with manual deployment and with hunting for the right command to dangerously deploy by hand, so I decided to let CircleCI do the work for me.

There are two main ingredients:

- A custom Docker image with hugo and Azure CLI

- The CircleCI config file

The docker image is available from Docker Hub.

And here is the .circleci/config.yml:

    version: 2.1
    jobs:
      build:
        docker:
          - image: tarwn/hugo-azurecli:latest
        working_directory: ~/project
        steps:
          - checkout
          - run:
              name: "Run Hugo"
              command: |
                cd ~/project/blogs
                hugo --config config-prod.toml -v
          # ... commented out junk ...
          - run:
              name: Deploy to Azure Blobs
              command: |
                az storage blob upload-batch --source ~/project/blogs/public --destination \$web --connection-string ${AZURE_STORAGE_CONNECTIONSTRING}
    workflows:
      version: 2
      build_and_test:
        jobs:
          - build:
              filters:
                branches:
                  only:
                    - master
                    - test

Now when I push a new commit to master, CircleCI picks it up, runs a hugo build with the production configuration (which includes the google analytics key), and re-uploads the content to Azure.

Note: This does not restrict the upload to changed files; it uploads everything, which added $0.25 to $0.50 in storage costs as I iterated on content cleanup.
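If those upload costs ever mattered, one option would be to work out which generated files actually changed between builds and upload only those. The Python sketch below shows the comparison step only, under assumptions that are not part of the setup described here: a manifest of content hashes is kept from the previous build, and `build_manifest` / `changed_files` are hypothetical helper names. Persisting the manifest between CI runs and feeding the result to the upload step are left out.

```python
import hashlib
from pathlib import Path


def build_manifest(public_dir) -> dict:
    """Map each file's path (relative to public_dir) to a SHA-256 of its contents."""
    root = Path(public_dir)
    return {
        path.relative_to(root).as_posix(): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def changed_files(previous: dict, current: dict) -> list:
    """Return paths that are new or whose contents differ from the previous build."""
    return sorted(
        path for path, digest in current.items() if previous.get(path) != digest
    )
```

With a manifest from the last deploy, `changed_files(old, build_manifest("public"))` gives the list of files worth re-uploading. At this site's scale the full upload is cheap enough that this is optional.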

Tracking 404s

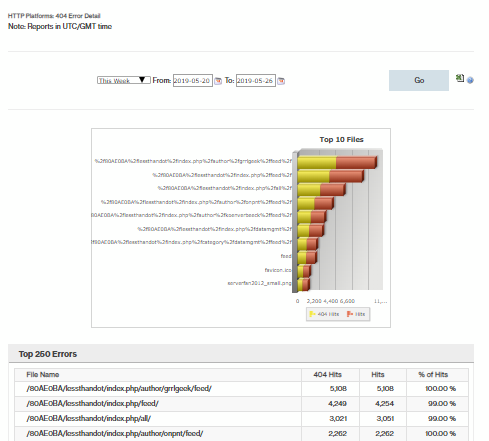

I am currently tracking 404s in two places: the CDN and Google Analytics.

CDN

Go to Analytics, Advanced HTTP Reports, HTTP Large Platform, By 404 Errors:

The chart is not that useful, but the breakdown of URLs is. Generally I am seeing URLs for various RSS feeds that are no longer present, some PHP pages, some category listing pages that don't exist, etc. Earlier, I would see missing stylesheets, scripts, and so on that I wanted to know about and fix in my hugo theme.

Google Analytics

I've created a custom report that tracks the original URL that a user was going to when they end up at the 404 page. This is higher quality than the CDN report when I'm looking for missing post URLs.

Post URLs that 404

Generally, the 404s for post URLs are due to posts belonging to multiple categories in Wordpress, which meant that depending on how you got to the post (or if it was edited later), it could legitimately have responded to another URL at some point, been copied to a post or StackOverflow answer somewhere, etc.

The best solution for these is to add them as aliases (all lowercase, don't forget) in the post file. Hugo will then generate redirects from each alternative URL to the permalink for the post.

Example:

    ---
    title: Don't start your procedures with SP_
    author: George Mastros (gmmastros)
    type: post
    date: 2009-11-04T13:16:28+00:00
    ID: 609
    url: /index.php/datamgmt/dbadmin/mssqlserveradmin/don-t-start-your-procedures-with-sp_/
    aliases:
      - /index.php/datamgmt/dbprogramming/mssqlserver/don-t-start-your-procedures-with-sp_/

And here's the alias URL.

Result: < $2/month

We're currently spending around $2/month to support blog traffic, we added a free SSL certificate along the way (Azure CDN option), and everything loads faster. Theoretically we could continue writing new posts with this static site, minus the wordpress tools, and add in something like Disqus for comments (or build our own with a couple Azure Functions).

Hopefully this helps someone else contemplating the same journey. It did take some work to glue together information from different sources. Azure's CDN was the most frustrating piece of the puzzle, followed by hugo, and then vs code's search + replace. Google Analytics, Circle CI, Azure blob storage, and Azure billing were all painless.

If I've left anything out, let me know below!