Table of Contents

- Why Terraform?

- Learning the Terraform Basics

- Target Architecture

- Terraform Configuration

- Issues/Friction

In this post, I'm outlining a simple architecture I've used as the basis for several small B2B apps, and defining and deploying that infrastructure with Terraform. The next post will integrate this into a Continuous Delivery pipeline via CircleCI. Here I'm focusing on the actual configuration, the Terraform azurerm resources (with lots of links), and some of the hiccups I ran into.

Why Terraform?

I've built several small and early-scale B2B apps in Azure. They don't have high infrastructure requirements, so I generally manage that infrastructure manually through the CLI or portal. The first friction points in these environments tend to be managing an ever-growing set of configurations and relying on discipline to roll resource changes out through multiple environments and steps.

This project is meant for experimentation. I don't want to pay to keep it running all the time, and I want to try some alternative starting methods to see whether Infrastructure-as-Code provides benefits from the beginning for a project at this scale or introduces more friction than goodness. I initially started on Pulumi, but switched over to Terraform.

Learning the Terraform Basics

If you haven't used Terraform, I'd highly recommend running through one of the example tutorials: https://learn.hashicorp.com/terraform.

The ones I've looked at are well-written, and walking through one will give you a good set of fundamentals on the files and commands used later in the post.

This post won't cover running Terraform commands or the basics, only the specifics of defining a basic Azure environment in Terraform.

Target Architecture

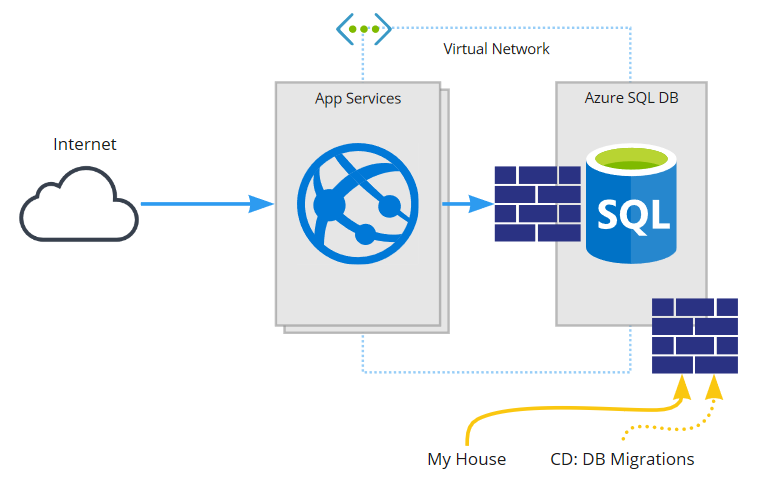

The target architecture for this application will look something like this:

This application will be:

- a React Single Page App, hosted and talking to...

- an ASP.NET Core (.NET 5) back-end, on Azure App Services (via blue/green deploy), talking to...

- an Azure SQL Database, secured via...

- a firewall limiting access to a VNet (that the application uses), some IP rules (my house), and dynamic rules for data migrations during deploys

This is a relatively small setup, but a good case for testing whether starting with Terraform (vs. manually) would push some of the friction out to a later stage of growth, or pull in more pain than it's worth in the early days.

Terraform Configuration

The sample application for this post is located here: https://github.com/tarwn/example-lob-app

The application is still in progress, but you can see several top-level folders for the main concerns.

For this post, we're mostly concerned with the "infrastructure" folder, which contains the Terraform configurations.

Here is a quick rundown of the relevant files:

- inputs.tf: the input vars to be provided, which includes a couple secret values that are not defaulted or committed

- main.tf: the main configuration of the infrastructure

- firewall_rules.tf: additional firewall rules (my house) so they can be managed in git/tf

- outputs.tf: the outputs we want, the names and IDs of resources that will be used by the Continuous Delivery pipeline
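The real inputs.tf and outputs.tf are in the repository. As a rough sketch of their shape, assuming only the variable names that main.tf references (resource_group_name, location, sql-login, sql-password, sql-threat-email), they might look something like the following. The defaults, which values are treated as secret, and the output names are all hypothetical, not copied from the repo.

```hcl
# inputs.tf (sketch): names match the var.* references in main.tf.
# Defaults are hypothetical placeholders.
variable "resource_group_name" {
  type    = string
  default = "tf-rg"
}

variable "location" {
  type    = string
  default = "eastus"
}

# Secret values: no defaults, supplied at plan/apply time
# (e.g. -var or an uncommitted .tfvars file). sensitive requires Terraform 0.14+.
variable "sql-login" {
  type      = string
  sensitive = true
}

variable "sql-password" {
  type      = string
  sensitive = true
}

variable "sql-threat-email" {
  type = string
}

# outputs.tf (sketch): hypothetical output names for the CD pipeline
output "resource_group_name" {
  value = azurerm_resource_group.rg.name
}

output "app_service_name" {
  value = azurerm_app_service.app.name
}

output "app_service_slot_name" {
  value = azurerm_app_service_slot.app-staging.name
}

output "sql_server_fqdn" {
  value = azurerm_mssql_server.db.fully_qualified_domain_name
}
```

Because Terraform loads every .tf file in the folder, splitting variables and outputs into their own files is purely organizational; the pipeline can read these values back with terraform output.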

Azure Auth for Terraform

Locally, we need the Azure CLI and Terraform installed, and we need to be logged in via az login.

Remotely (CircleCI), we need a Docker image that has both Terraform and the Azure CLI, and we'll create a Service Principal for auth (examples in this Terraform tutorial or this Microsoft one) and add its values as environment variables for the build.

Main Configuration

The file is on GitHub at /infrastructure/main.tf.

Configure the backend

```hcl
# Configure the Azure provider
terraform {
  backend "azurerm" {
    resource_group_name  = "terraform-rg"
    storage_account_name = "lobterraformstatestor"
    container_name       = "stateprod"
    key                  = "dev.terraform.tfstate"
  }

  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 2.65"
    }
  }

  required_version = ">= 0.14.9"
}
```

I configured the Terraform backend state to use Azure Blob Storage (azurerm terraform backend). This is a secondary Azure Resource Group and Storage Account used only for infrastructure. It was set up via the portal, and I manually created the named container above.
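If you'd rather script the state storage as well, a minimal sketch is a separate bootstrap configuration in its own folder. It has to use local state, since it can't store its state in a container that doesn't exist yet. The resource names mirror the backend block; the folder name, location, and replication setting are assumptions.

```hcl
# bootstrap/main.tf (hypothetical): creates the storage the azurerm backend points at.
# No backend block, so this configuration keeps its own state locally.
terraform {
  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 2.65"
    }
  }
}

provider "azurerm" {
  features {}
}

resource "azurerm_resource_group" "tfstate" {
  name     = "terraform-rg"
  location = "eastus" # assumption
}

resource "azurerm_storage_account" "tfstate" {
  name                     = "lobterraformstatestor"
  resource_group_name      = azurerm_resource_group.tfstate.name
  location                 = azurerm_resource_group.tfstate.location
  account_tier             = "Standard"
  account_replication_type = "LRS" # assumption; GRS adds geo-redundancy
}

resource "azurerm_storage_container" "stateprod" {
  name                  = "stateprod"
  storage_account_name  = azurerm_storage_account.tfstate.name
  container_access_type = "private"
}
```

This trades the manual portal step for a small second state file that lives outside the remote backend, which is the usual chicken-and-egg compromise.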

Initially, I plan to gate the deployment process and not actually apply infrastructure changes from CircleCI. If there are outstanding infrastructure changes, I want the build to fail until I apply the changes and re-run it.

The Provider and Resource Group

```hcl
provider "azurerm" {
  features {}
}

# Resource Group
resource "azurerm_resource_group" "rg" {
  name     = var.resource_group_name
  location = var.location
}
```

Add the Azure RM provider and create a new resource group (azurerm_resource_group), using the vars from inputs to specify the name and location.

Vnet

```hcl
# Virtual Network and subnet
resource "azurerm_virtual_network" "vnet" {
  name                = "tf-vnet"
  address_space       = ["10.0.0.0/16"]
  location            = var.location
  resource_group_name = azurerm_resource_group.rg.name
}

resource "azurerm_subnet" "vnet" {
  name                 = "tf-subnet"
  resource_group_name  = azurerm_resource_group.rg.name
  virtual_network_name = azurerm_virtual_network.vnet.name
  address_prefixes     = ["10.0.1.0/24"]
  service_endpoints    = ["Microsoft.Sql"]

  delegation {
    name = "vnet-delegation"

    service_delegation {
      name    = "Microsoft.Web/serverFarms"
      actions = ["Microsoft.Network/virtualNetworks/subnets/action"]
    }
  }
}
```

Add a virtual network (azurerm_virtual_network) and a subnet (azurerm_subnet). To make the subnet available to SQL Database, we add the "Microsoft.Sql" service endpoint. To make it available to the web apps, we add a service_delegation to Microsoft.Web/serverFarms, which App Service's regional VNet integration requires.

Note that we are referencing values created earlier, like assigning the resource group name from azurerm_resource_group.rg.name.

SQL Server

```hcl
# SQL Database
resource "azurerm_mssql_server" "db" {
  name                          = "tf-dbsrv"
  resource_group_name           = azurerm_resource_group.rg.name
  location                      = var.location
  version                       = "12.0"
  administrator_login           = var.sql-login
  administrator_login_password  = var.sql-password
  public_network_access_enabled = true
}

resource "azurerm_mssql_database" "db" {
  name                 = "tf-db"
  server_id            = azurerm_mssql_server.db.id
  collation            = "SQL_Latin1_General_CP1_CI_AS"
  read_scale           = false
  sku_name             = "Basic"
  storage_account_type = "GRS"

  threat_detection_policy {
    state           = "Enabled"
    email_addresses = [var.sql-threat-email]
  }
}

resource "azurerm_mssql_server_transparent_data_encryption" "db" {
  server_id = azurerm_mssql_server.db.id
}

resource "azurerm_mssql_virtual_network_rule" "db" {
  name      = "tf-db-vnet"
  server_id = azurerm_mssql_server.db.id
  subnet_id = azurerm_subnet.vnet.id
}
```

Now I create the database server (azurerm_mssql_server) with a single database (azurerm_mssql_database). I'm using the smallest size for now, a $5/mo Basic database, so I don't have some of the redundancy and other advanced options to play with.

I did configure the threat detection policy and transparent data encryption (service-managed; I didn't want to mess with customer-managed keys for this), a couple of the other features you get for free with the PaaS option. There are also resources available to set up security alerts, enable extended auditing, configure vulnerability assessment rule baselines, etc., but I don't expect to use those for this sample project.

Last, I configured a rule to allow access from the VNet that the Web App is integrated with, which serves as a firewall rule so I don't have to leave the DB firewall open to all Azure resources.
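The "my house" IP rule lives in firewall_rules.tf. A sketch of what such a rule could look like with the 2.x provider follows; it is not the repo's file. It assumes the azurerm_sql_firewall_rule resource, which addresses the same underlying SQL server by name, and the rule name and IP address (taken from the 203.0.113.0/24 documentation range) are placeholders.

```hcl
# firewall_rules.tf (sketch): allow a single public IP through the SQL Server firewall
resource "azurerm_sql_firewall_rule" "home" {
  name                = "tf-fw-home" # hypothetical name
  resource_group_name = azurerm_resource_group.rg.name
  server_name         = azurerm_mssql_server.db.name
  start_ip_address    = "203.0.113.10" # placeholder IP
  end_ip_address      = "203.0.113.10"
}
```

For contrast, a rule with both addresses set to 0.0.0.0 is Azure's special "allow Azure services" rule, which is exactly the broad opening the VNet rule above avoids.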

App Services and Slots

```hcl
# App Service Plan + Slots
resource "azurerm_app_service_plan" "asp" {
  name                = "tf-asp"
  location            = var.location
  resource_group_name = azurerm_resource_group.rg.name
  kind                = "Windows"
  reserved            = false

  sku {
    tier = "Standard"
    size = "S1"
  }
}

resource "azurerm_app_service" "app" {
  name                = "tf-app-lobex"
  location            = var.location
  resource_group_name = azurerm_resource_group.rg.name
  app_service_plan_id = azurerm_app_service_plan.asp.id
  https_only          = true

  site_config {
    always_on                = false
    dotnet_framework_version = "v5.0"
    http2_enabled            = true
  }
}

resource "azurerm_app_service_virtual_network_swift_connection" "app" {
  app_service_id = azurerm_app_service.app.id
  subnet_id      = azurerm_subnet.vnet.id
}

resource "azurerm_app_service_slot" "app-staging" {
  name                = "staging"
  app_service_name    = azurerm_app_service.app.name
  location            = var.location
  resource_group_name = azurerm_resource_group.rg.name
  app_service_plan_id = azurerm_app_service_plan.asp.id
  https_only          = true

  site_config {
    always_on                = false
    dotnet_framework_version = "v5.0"
    http2_enabled            = true
    websockets_enabled       = false
  }
}

resource "azurerm_app_service_slot_virtual_network_swift_connection" "app-staging" {
  slot_name      = azurerm_app_service_slot.app-staging.name
  app_service_id = azurerm_app_service.app.id
  subnet_id      = azurerm_subnet.vnet.id
}
```

I configured this to use a Standard S1 App Service Plan, to support the VNet integration (and out of habit). This is a $73/mo option, but it provides up to 5 deployment slots when we only need 2, leaves headroom to run several other services, and picks up some additional benefits like free auto-renewed SSL certs.

Just as if we were creating this through the portal, we create an App Service Plan (azurerm_app_service_plan) first, then the App Service (azurerm_app_service) and staging slot (azurerm_app_service_slot). Most of the settings should be clear if you've done this through the portal or CLI before. However, some names are different, and there are a couple of magic values like reserved (false for Windows, true for Linux).
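To make the reserved pairing concrete, here is a sketch of what a Linux version of the same plan would look like. It is not part of this project, and the resource label and name are hypothetical. The provider documentation notes that reserved must be true when creating a Linux plan; setting kind alone is not enough.

```hcl
# Hypothetical Linux equivalent of the plan above (not used in this project)
resource "azurerm_app_service_plan" "asp_linux" {
  name                = "tf-asp-linux"
  location            = var.location
  resource_group_name = azurerm_resource_group.rg.name
  kind                = "Linux"
  reserved            = true # required alongside kind = "Linux"

  sku {
    tier = "Standard"
    size = "S1"
  }
}
```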

Finally, we configure VNet access for both the App (azurerm_app_service_virtual_network_swift_connection) and the Slot (azurerm_app_service_slot_virtual_network_swift_connection) independently.

Issues/Friction

Azure Rule Verifications

Azure still verifies that names meet the requirements for the given service, that the SQL admin login and password meet the relevant rules, etc. These come back as errors during a terraform apply, and the error messages I received when trying this were pretty clear.

Example:

```
Error: waiting on create/update future for SQL Server "tf-dbsrv" (Resource Group "tf-rg"):
Code="PasswordNotComplex" Message="Password validation failed. The password does not meet policy requirements because it is not complex enough."
```

Database Creation Hang

One worrisome issue I ran into was a hang while creating the database on one run. Terraform kept waiting for the database creation to finish, printing a progress message at regular intervals, even though the Azure portal showed the DB had been created successfully. I ended up killing the terraform apply. This left Terraform's state unaware that the DB already existed, so it couldn't continue (the resource can't be created because it already exists). I used terraform import to add the database to the state, which allowed the next terraform apply to pick up and continue, but it worries me what this says about how the AzureRM provider is operating.
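On Terraform 1.5 or later, the same recovery can also be written declaratively with an import block, so terraform plan shows the database as an import instead of a create. This is a sketch assuming the resource group and server names from the error message earlier and the database name from main.tf; the subscription ID is a placeholder, and the project's required_version (>= 0.14.9) would need to be raised to at least 1.5 to rely on it.

```hcl
# Requires Terraform >= 1.5: adopt the already-created database into state
import {
  to = azurerm_mssql_database.db
  id = "/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/tf-rg/providers/Microsoft.Sql/servers/tf-dbsrv/databases/tf-db"
}
```

Once the import has been applied, the block can be deleted or left in place as a record of where the resource came from.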

Extra Properties in Azure

One of the more annoying things I keep running into is that after I run a terraform apply for new resources, the next terraform plan will often report changes for things like empty tag lists. I've also run into a case where I think my deploy script causes Azure to reconfigure a couple of properties on the Web App the first time it runs, resulting in differences from my Terraform config.

On the first one, I'm not sure why the state saved from applying the config doesn't match the state queried during the next terraform plan. This feels broken, and I've taken to running terraform apply followed immediately by terraform apply -refresh-only, which also feels questionable.
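Another option, a workaround rather than a fix for the underlying mismatch, is Terraform's lifecycle ignore_changes meta-argument. A sketch using the resource group from main.tf with a lifecycle block added, assuming tags are the noisy attribute:

```hcl
resource "azurerm_resource_group" "rg" {
  name     = var.resource_group_name
  location = var.location

  lifecycle {
    # Don't plan updates for tag differences reported after creation
    ignore_changes = [tags]
  }
}
```

The same block on azurerm_app_service could list whichever attributes the deploy script changes, at the cost of Terraform no longer managing those attributes at all.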

External Resource Changes

Another long post on the back burner is a comparison of configuration management tactics for these types of applications. Terraform very explicitly wants you to manage resources only through Terraform, but that puts some constraints on where we manage configuration and what lifecycle we use for it (immediate vs. reboot updates, direct to production vs. blue/green, etc.). That may not be a bad thing; I have a long set of notes on configuration management for these types of applications that I'm still organizing.

I don't have conclusions on this yet, but I recognized a number of friction points along the way that I'm going to want to explore further.